John Peter Flynn

📍 San Francisco, CA

I’m a Senior Machine Learning Researcher and founding employee at Pipio, where my team and I build video editing tools powered by diffusion transformers. We just released a new paper called EditYourself.

I completed my MSc in Computer Science at the Technical University of Munich, where I wrote my thesis on graph neural networks under the supervision of Prof. Matthias Nießner in the Visual Computing and AI Lab. Before that, I studied at the University of California, Davis (go Ags 🥳), where I earned a BS in Electrical Engineering and Computer Engineering.

I’m currently interested in video diffusion and 3D computer vision, with emerging interests in world models and AI safety. I’m particularly drawn to research that bridges digital models and physical reality.

Publications & Projects

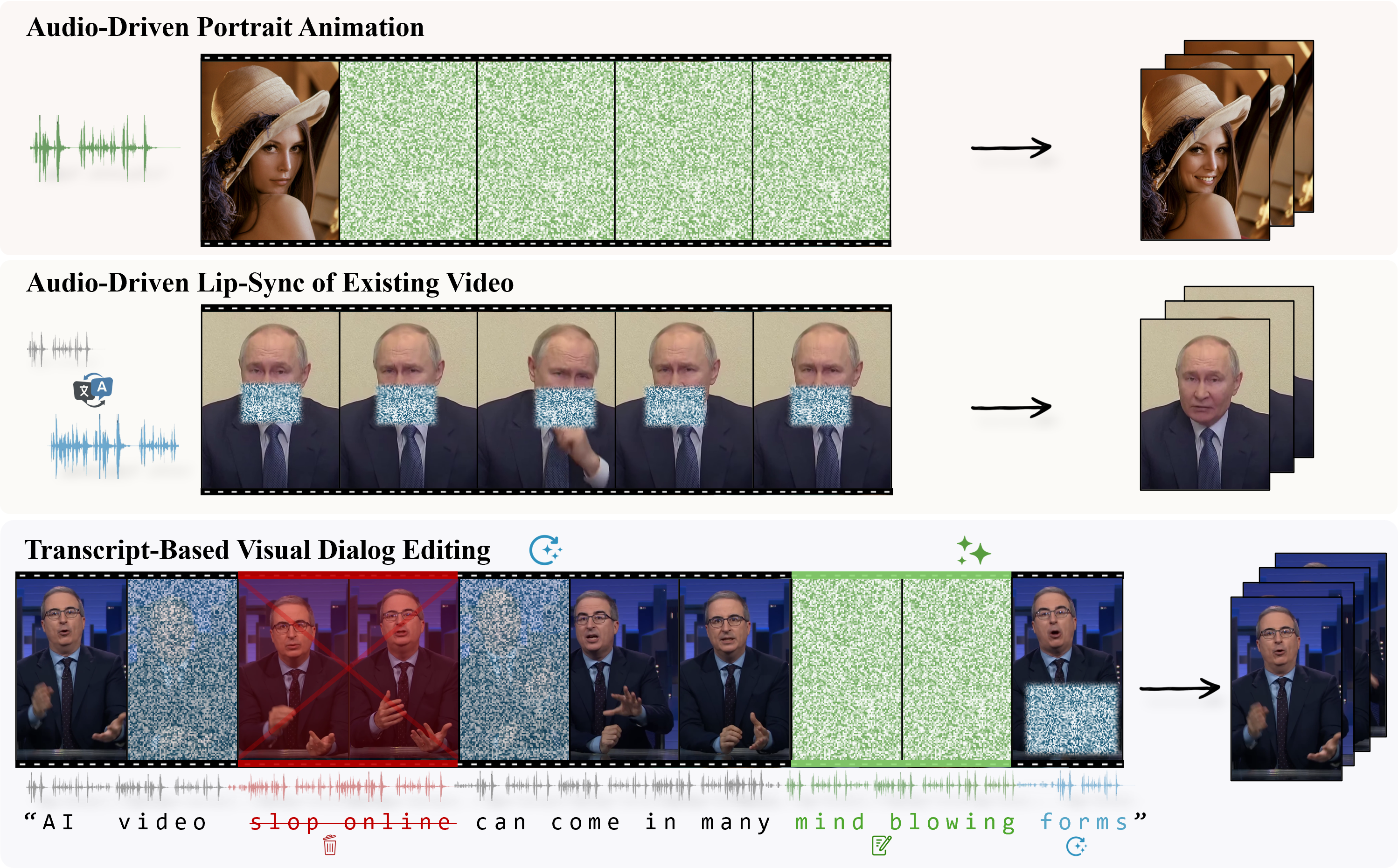

EditYourself: Audio-Driven Generation and Manipulation of Talking Head Videos with Diffusion Transformers

A diffusion-based video editing model for talking heads, enabling transcript-driven lip-syncing, insertion, removal, and retiming of video while preserving identity and visual fidelity. Please refer to Ethical Considerations (Section 5.1) in our paper.

STINet: Surface Texture Inpainting Using Graph Neural Networks

Master’s thesis exploring graph neural networks for completing texture on partially textured 3D meshes. Unlike traditional 2D image inpainting, STINet operates directly on mesh surfaces, leveraging vertex positions, normals, and connectivity to predict vertex colors. This represents the first application of GNNs to surface texture completion.
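For intuition about working on mesh connectivity rather than a 2D image grid, here is a minimal, non-learned sketch. It is not the STINet architecture: it only builds a vertex graph from triangle faces and fills in missing vertex colors by averaging already-colored neighbors, which is the kind of fixed rule a learned GNN layer would replace. The function names `mesh_neighbors` and `propagate_colors` are hypothetical, and vertex positions and normals are left out for brevity.

```python
# Illustrative baseline only; NOT the STINet model.
# All names here are hypothetical and chosen for this sketch.

def mesh_neighbors(faces):
    """Build undirected vertex adjacency from triangle faces."""
    neighbors = {}
    for a, b, c in faces:
        for u, v in ((a, b), (b, c), (c, a)):
            neighbors.setdefault(u, set()).add(v)
            neighbors.setdefault(v, set()).add(u)
    return neighbors


def propagate_colors(colors, known, neighbors, rounds=10):
    """Fill unknown vertex colors by averaging already-colored neighbors.

    colors: dict vertex -> (r, g, b) for the known vertices
    known:  iterable of vertex ids whose colors are given
    A learned GNN layer would replace this fixed averaging rule.
    """
    colors = dict(colors)
    filled = set(known)
    for _ in range(rounds):
        updates = {}
        for v, nbrs in neighbors.items():
            if v in filled:
                continue
            src = [colors[n] for n in nbrs if n in filled]
            if src:
                # Channel-wise mean over the colored neighbors.
                updates[v] = tuple(sum(ch) / len(src) for ch in zip(*src))
        if not updates:
            break  # nothing left to fill (or unreachable vertices remain)
        colors.update(updates)
        filled.update(updates)
    return colors


# Two triangles sharing the edge (1, 2); vertices 2 and 3 are untextured.
faces = [(0, 1, 2), (1, 2, 3)]
known_colors = {0: (1.0, 0.0, 0.0), 1: (0.0, 0.0, 1.0)}
result = propagate_colors(known_colors, known_colors.keys(), mesh_neighbors(faces))
```

Updates within a round are applied together, so a vertex only sees colors that were available at the start of that round.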

Stereo-Camera ORB-SLAM for Indoor 3D Reconstruction

Real-time stereo-camera SLAM system implementing sparse, indirect feature-correspondence methods with a covisibility graph for storing spatially related keyframes and landmarks. Features tracking, local mapping, and loop-closing threads for robust camera localization and 3D reconstruction.
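As a rough illustration of the covisibility graph idea, the sketch below links two keyframes whenever they observe at least one common landmark and weights the edge by the number of shared landmarks. It is a simplified stand-in, not the project's code: the class name `CovisibilityGraph` and its methods are hypothetical, and it assumes each keyframe is added exactly once.

```python
from collections import defaultdict

# Simplified sketch; names are hypothetical, not taken from the project.
class CovisibilityGraph:
    def __init__(self):
        self.observations = {}            # keyframe id -> set of landmark ids
        self.seen_by = defaultdict(set)   # landmark id -> keyframe ids that see it
        self.weights = defaultdict(dict)  # keyframe id -> {neighbor id: shared count}

    def add_keyframe(self, kf_id, landmark_ids):
        """Insert a keyframe and connect it to keyframes sharing landmarks.

        Assumes kf_id has not been added before.
        """
        landmarks = set(landmark_ids)
        self.observations[kf_id] = landmarks
        shared = defaultdict(int)
        for lm in landmarks:
            for other in self.seen_by[lm]:
                shared[other] += 1
            self.seen_by[lm].add(kf_id)
        # Store the edge weight symmetrically.
        for other, count in shared.items():
            self.weights[kf_id][other] = count
            self.weights[other][kf_id] = count

    def covisible(self, kf_id, min_shared=1):
        """Return (neighbor, shared count) pairs, strongest connection first."""
        pairs = [(o, w) for o, w in self.weights[kf_id].items() if w >= min_shared]
        return sorted(pairs, key=lambda p: -p[1])


graph = CovisibilityGraph()
graph.add_keyframe(0, [1, 2, 3])
graph.add_keyframe(1, [2, 3, 4])
graph.add_keyframe(2, [4, 5])
local_map = graph.covisible(1)  # keyframes that could feed local mapping for keyframe 1
```

Raising `min_shared` keeps only strongly connected keyframes, which is one way to bound the set of keyframes considered for local optimization.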